Assessing the Evidence Series

By Melissa Brouwers, PhD

Introduction

Practice guidelines are a terrific idea. They provide recommendations based on evidence, and on the interpretation and contextualization of that evidence, and can influence clinical decisions, clinical policy, and health system structures, organization, and function. "Clinical practice guideline" is the term most commonly used for documents that advise on clinical actions, and "health system guidance" is the term used for documents that advise on system-related issues. Practice guidelines have been linked to changes in processes of care, improvements in clinical outcomes, and access to care options.

AGREE

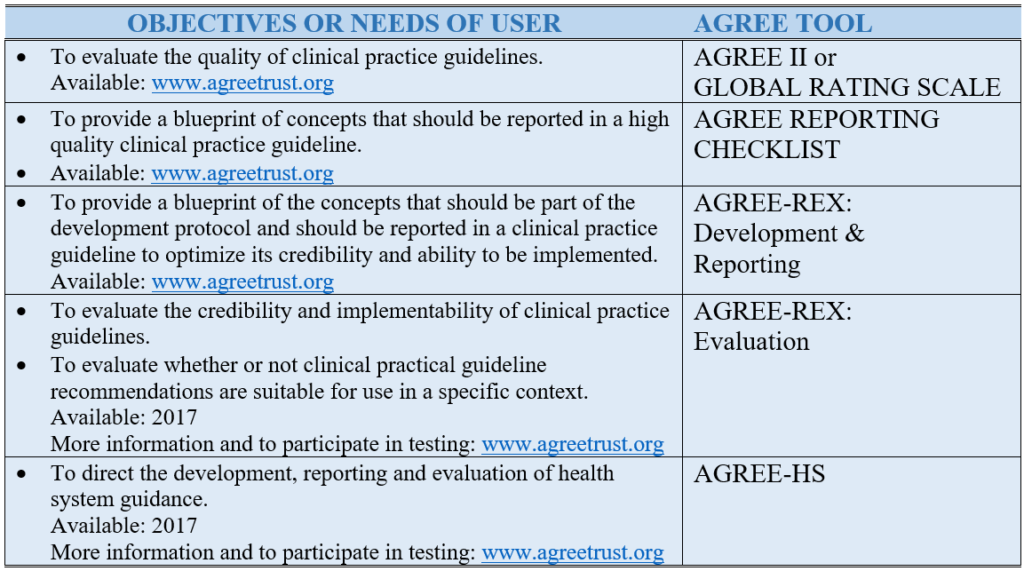

However, just as clinical trials, systematic reviews, and other studies can vary in quality, so too can practice guidelines. Poor-quality practice guidelines can be biased or unclear, can result in recommendations that do not reflect the most beneficial and safe option, or can result in harmful options being recommended. Led by a team of international practice guideline scientists, developers, implementers and users, the AGREE (Appraisal of Guidelines REsearch and Evaluation) program of research emerged as a response to these problems. Throughout its almost 20-year history, the overall aim of the AGREE community has remained the same: to improve the quality of patient care and health system performance through advances in the science and application of practice guidelines. This aim is achieved by creating practice guideline evaluation tools that can also serve as blueprints to direct practice guideline development and reporting (see Table 1).

Table 1. AGREE Tools to Meet Users’ Needs and Objectives.

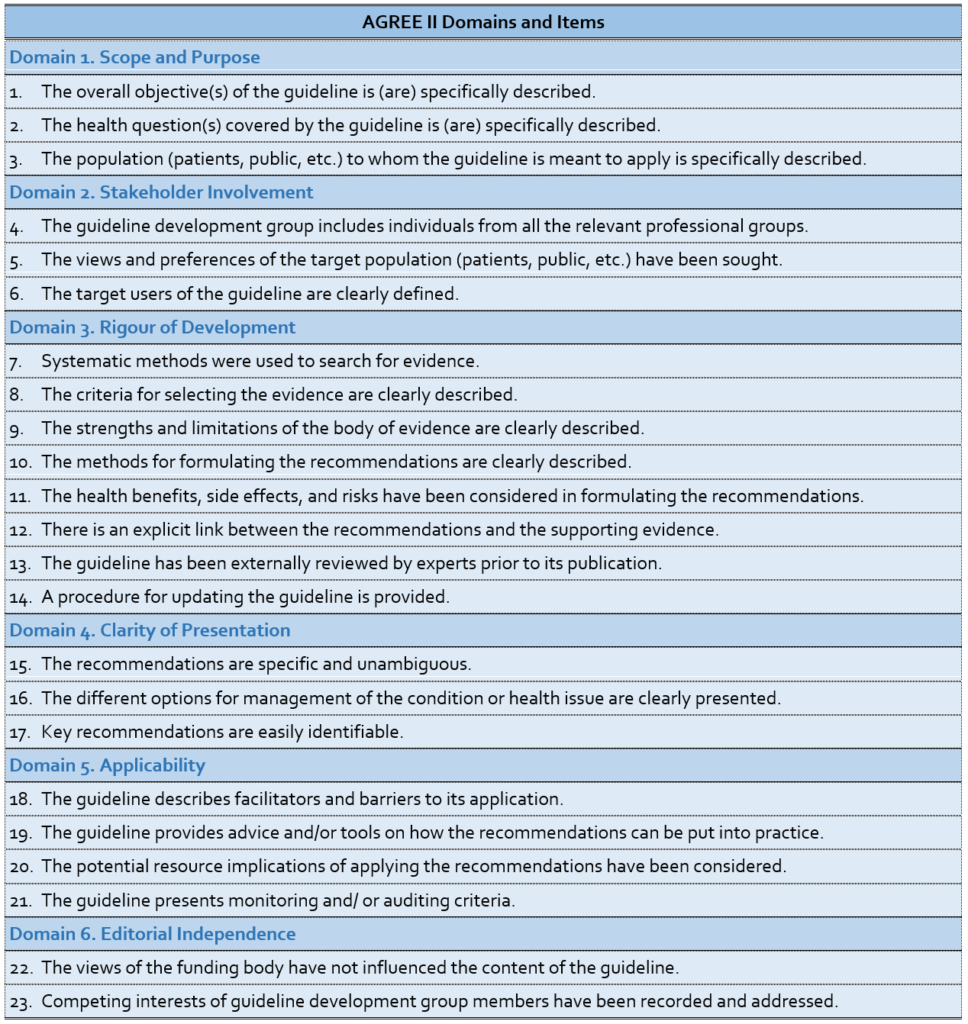

The AGREE II (a revised version of the original AGREE Instrument) is the most established and well known of the tools and is relevant to clinical practice guidelines. It is a reliable and valid instrument comprising six domains and 23 items that emerged from the literature and from testing by, and consensus among, the international practice guideline community (see Table 2). It has been translated into dozens of languages and is supported by online training platforms that have been tested in randomized trials. The Global Rating Scale (soon to be rebranded as AGREE Lite) is an abbreviated version of the AGREE II and is useful for rapid assessments. In 2016, the AGREE Reporting Checklist was released. It includes the items and concepts of the AGREE II but presents them in a reporting checklist format to help practice guideline developers create their protocols and ensure that all the appropriate information is reported in their final documents.

Table 2. The AGREE II Domains and Items

The AGREE II considers the whole practice guideline process. However, practice guidelines produced in a methodologically sound manner still run the risk of producing recommendations that might not be clinically relevant or implementable for a specific context. This has led the team to develop a complement to the AGREE II, the AGREE-REX (Recommendations EXcellence), a practice guideline tool that specifically targets recommendations. Two versions of the AGREE-REX are being developed. The evaluation version of the AGREE-REX allows users to assess the credibility and implementability of the recommendations and to assess the suitability of specific recommendations for application in a particular context. The latter use is intended to promote practice guideline endorsement and adaptation and to reduce the duplication of effort across developer groups. The development/reporting version of the AGREE-REX provides users with a blueprint for how best to optimize their recommendations. The AGREE-REX is scheduled for release in 2017 after testing is complete.

Finally, the AGREE team recognizes that clinical care is provided within a larger health system and that the system must be designed, organized, and resourced in a manner that enables effective health options to be provided. This has led to another project, the AGREE-HS (Health System), which aims to support the development, reporting, and evaluation of health system guidance. Emerging from the AGREE team, with participation and representation from each of the World Health Organization regions, the AGREE-HS is also anticipated for release in 2017.

Philosophy of AGREE

In addition to their well-known evaluation purposes, the AGREE products are also intended to serve as development and reporting blueprints. It is important to distinguish the role of blueprints from specific operational methods. This distinction gives the AGREE tools the flexibility to identify, in their supporting documentation, specific methods that do a good job of operationalizing each of the instruments’ important concepts. For example, the AGREE II tool and AGREE Reporting Checklist point to components of the GRADE methodology as good examples of how to achieve success with some of the underlying concepts related to evidence (see August 2016 Growth Commentary); similarly, the AGREE-REX points to the Evidence to Decision framework (see September 2016 GROWTH commentary). As methods evolve and change over time, and new methods emerge, so too will the supporting documentation of the AGREE tools.

The AGREE tools and resources are popular. They have served as the foundation for many development and evaluation protocols for established groups such as Cancer Care Ontario (Ontario, Canada), the National Institute for Health and Care Excellence (UK), and the Institute of Medicine’s Trustworthy Guidelines initiative. Together, the AGREE II, AGREE Reporting Checklist, AGREE-REX, and AGREE-HS provide a suite of rigorous, yet practical, resources to optimize development, reporting, and evaluation in the practice guideline enterprise.

Resources and links

AGREE Research Trust: for all things AGREE-related.

Key AGREE II Papers (including Global Rating Scale).

- Brouwers MC, Kho ME, Browman GP, Burgers J, Cluzeau F, Feder G, Fervers B, Graham ID, Grimshaw J, Hanna S, Littlejohns P, Makarski J, Zitzelsberger L on behalf of the AGREE Next Steps Consortium. AGREE II: Advancing guideline development, reporting and evaluation in healthcare. Can Med Assoc J. 2010;182:E839-842; doi:10.1503/cmaj.090449

- Brouwers MC, Kho ME, Browman GP, Burgers JS, Cluzeau F, Feder G, et al. Development of the AGREE II, part 1: performance, usefulness and areas for improvement. Can Med Assoc J. 2010;182(10):1045-52.

- Brouwers MC, Kho ME, Browman GP, Burgers JS, Cluzeau F, Feder G, et al. Development of the AGREE II, part 2: assessment of validity of items and tools to support application. Can Med Assoc J. 2010;182(10):E472-E8.

- Brouwers MC, Kho ME, Browman GP, Burgers JS, Cluzeau F, Feder G, et al. The Global Rating Scale complements the AGREE II in advancing the quality of practice guidelines. J Clin Epidemiol. 2012;65(5):526-34.

Key AGREE Reporting Checklist Paper.

- Brouwers MC, Kerkvliet K, Spithoff K, AGREE Next Steps Consortium. The AGREE Reporting Checklist: a tool to improve reporting of clinical practice guidelines. BMJ. 2016;352.

Key foundation paper for AGREE-REX.

- Brouwers M, Makarski J, Kastner M, Hayden L, Bhattacharyya O, the GUIDE-M Research Team. The Guideline Implementability Decision Excellence Model (GUIDE-M): a mixed methods approach to create an international resource to advance the practice guideline field. Implement Sci. 2015;10(1):36.

Key AGREE-HS Paper.

- Ako-Arrey DE, Brouwers MC, Lavis JN, Giacomini MK, AGREE-HS Team. Health system guidance appraisal-concept evaluation and usability testing. Implement Sci. 2016;11.

About the author:

https://fhs.mcmaster.ca/oncology/faculty/melissabrouwers.html