By Craig Whittington, PhD and Jacob Franek, MHSc

Introduction

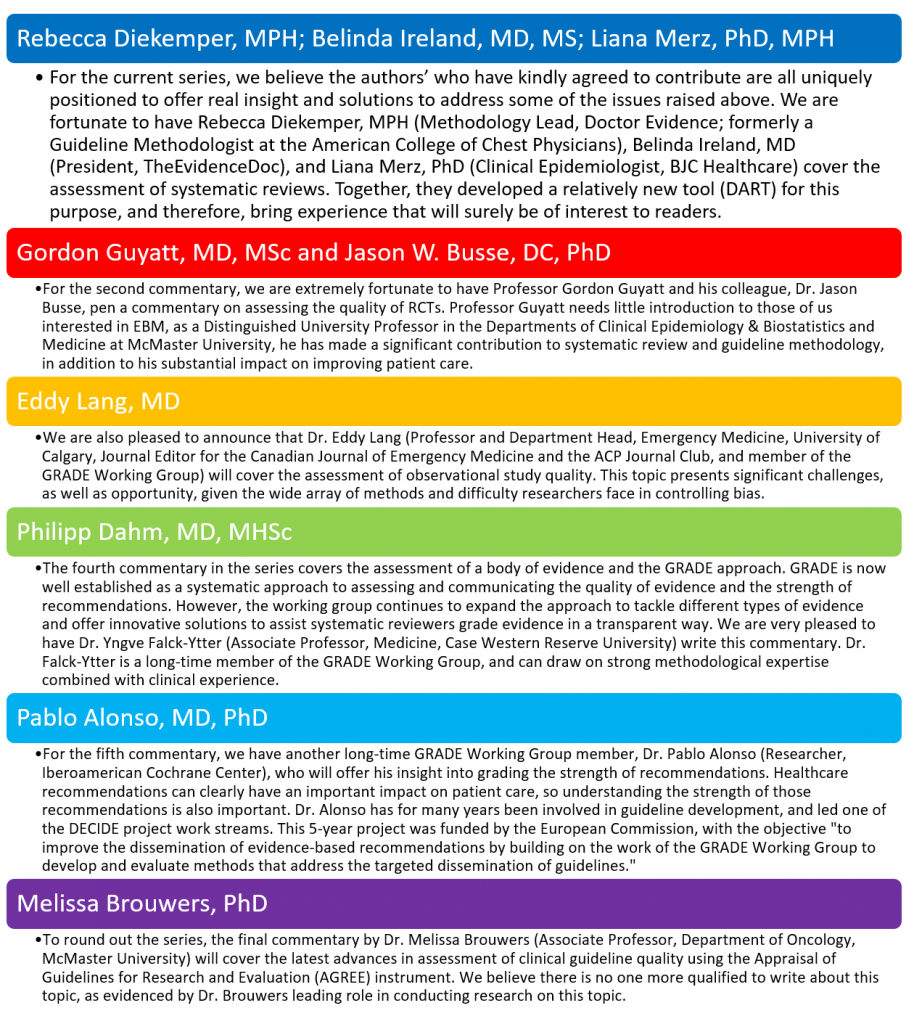

GROWTH commentaries started in November 2014 and have covered a broad range of topics relevant to those interested in evidence-based medicine (EBM). We are now pleased to introduce a series of commentaries on a specific theme: Assessing the Evidence. This 6-part series progresses from assessing the quality of randomized controlled trials (RCTs) through to assessing the quality of guidelines.

Making judgments about evidence and recommendations in healthcare is complex. Often there is insufficient information to draw a well-informed inference from the evidence, yet in most cases inferences must still be made and guidance provided. Systematic reviewers and others who use evidence must therefore make judgments about the quality of, or certainty in, the evidence, and these judgments ultimately guide important healthcare decisions and recommendations.

Critical to making these judgments is a systematic and explicit approach that guides how they are made. The lack of such an approach increases the probability of errors in judgment, hinders critical appraisal of the judgments, and prevents them from being communicated externally, including the rationales for decisions or recommendations that rely on them.

Evidence Quality

There are many different systems for assessing the quality of evidence, which makes the choice complicated. Differences in terminology complicate the matter further. Terms such as “quality,” “validity,” and “bias” have all been used to reflect similar or even dissimilar quality concepts. Here, we will use risk of bias to mean the likelihood that a study's results deviate systematically from the truth (i.e., the over- or under-estimation of a true treatment effect) because of flaws in the design and execution of the study [1]. This is related to, but distinct from, the definition of quality of evidence employed by the GRADE Working Group [2], which we hereafter define as the certainty in the estimates of the treatment effect. That certainty reflects not only risk of bias, but also precision, consistency, and directness across a body of evidence [3]. The differences between these definitions highlight fundamental differences in approaches to assessing the quality of evidence. Assessments of risk of bias, using Cochrane’s Risk of Bias tool for example, help reviewers to determine whether the results of an included study should be believed. Assessments of the quality of evidence, using the GRADE approach for example, help reviewers to determine how confident they can be in effect estimates from a body of evidence.

Beyond the level at which quality is being judged (the individual study or across studies), there are considerations concerning the type of research question and the study designs relevant to a review. Different study designs, most notably randomized versus non-randomized studies, by nature require different approaches to assessing quality or risk of bias (as an example, compare Cochrane’s tools for assessing risk of bias in randomized [4] and non-randomized [5] studies). There are also dedicated approaches for assessing the quality of research that synthesizes evidence, such as systematic reviews and evidence-based guidelines, and for grading the strength of the recommendations that result from guidelines.

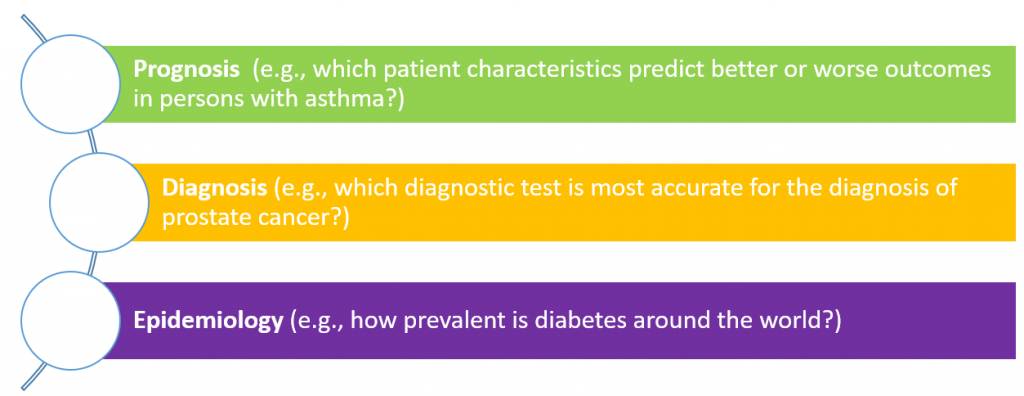

The most well developed area is the assessment of individual studies or reviews that address questions of comparative efficacy or safety. For example, does the addition of an ACE inhibitor to a beta-blocker do more good than harm compared to a beta-blocker alone for the treatment of chronic heart failure? However, other types of research questions must also be asked to better understand health and healthcare systems. These questions rely on different study designs with different study objectives and ultimately require different quality evaluation tools. Common question types include:

While tools do exist for evaluating risk of bias and quality for these other question types, they are more difficult to use and less well known to reviewers and evidence stakeholders. Despite these limitations, the now universal recognition of the importance of assessing quality when judging evidence will ensure a continued push towards more research, greater uptake, and ultimately higher quality.

We hope you follow along with this series in the upcoming months and gain insights that will enhance your work and use of clinical evidence.

References

1. Guyatt G, Busse J. Methods Commentary: Risk of Bias in Randomized Trials 1. Available at https://distillercer.com/resources/methodological-resources/risk-of-bias-commentary/. Accessed April 15, 2016.

2. GRADE working group. Available at http://www.gradeworkinggroup.org/. Accessed April 18, 2016.

3. Guyatt GH, Oxman AD, Vist GE, Kunz R, Falck-Ytter Y, Alonso-Coello P, Schünemann HJ, for the GRADE Working Group. GRADE: an emerging consensus on rating quality of evidence and strength of recommendations. BMJ 2008;336:924-926.

4. Higgins JPT, Altman DG, Sterne JAC (editors) on behalf of the Cochrane Statistical Methods Group and the Cochrane Bias Methods Group. Chapter 8: Assessing Risk of Bias in Included Studies. In Higgins JPT and Green S (editors), Cochrane Handbook for Systematic Reviews of Interventions Version 5.1.0 [updated March 2011]. The Cochrane Collaboration, 2011. Available at http://handbook.cochrane.org/. Accessed April 18, 2016.

5. Sterne JAC, Hernán MA, Reeves BC, Savović J, Berkman ND, Viswanathan M, Henry D, Altman DG, Ansari MT, Boutron I, Carpenter J, Chan AW, Churchill R, Hróbjartsson A, Kirkham J, Jüni P, Loke Y, Pigott T, Ramsay C, Regidor D, Rothstein H, Sandhu L, Santaguida P, Schünemann HJ, Shea B, Shrier I, Tugwell P, Turner L, Valentine JC, Waddington H, Waters E, Whiting P, Higgins JPT. The ROBINS-I tool (Risk Of Bias In Non-randomized Studies - of Interventions) Version 7 [updated March 2016]. The Cochrane Collaboration, 2011. Available at https://sites.google.com/site/riskofbiastool/. Accessed April 18, 2016.